Inside Bellevue School District’s AI Policy

In this post, I will briefly review the Bellevue School District’s AI Policy. It will be short and sweet, much like the policy itself.

If you’d like to read the policy for yourself, it is titled 2022.2P: Acceptable Use of Artificial Intelligence (AI). Once you pull it up, a few things jump out.

For one, the policy was put in place on August 13, 2025. That’s almost as recent as it gets. And I think that raises some concerns, such as why it took so long to implement and how difficult it actually is to navigate AI in schools.

The short answer to both of those concerns is this: AI is complicated.

We should probably start by asking ourselves, “What is AI?”

What is AI?

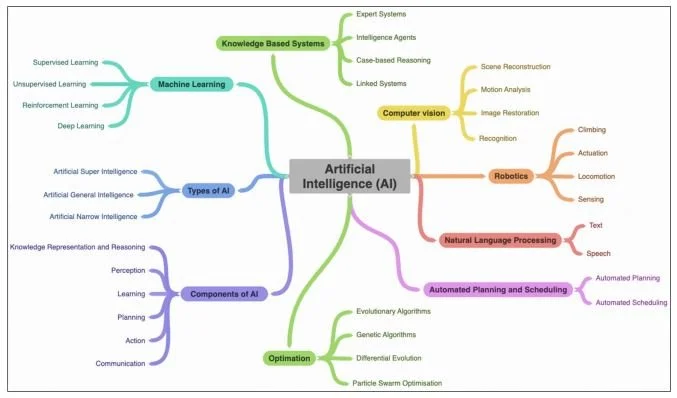

Note. From Figure 2, “Components, types and subfield of AI” (Regona et al., 2022).

Again, AI is complicated.

To start, artificial intelligence (AI) is exactly what it sounds like. It’s a type of technology that tries to perform tasks we usually associate with human ‘intelligence.’

These include problem-solving, comprehension, decision-making, and even ‘creativity.’

Believe it or not, the concept of AI goes back as far as the 1950s and Alan Turing.

But we don’t have to go that far back in time. The big questions about AI and its ethical use became widely popular in late 2022, when OpenAI released ChatGPT.

ChatGPT belongs to a specific subfield of AI known as “generative AI,” which uses deep learning models to create new content based on the prompts we give it.

In many ways, this is why schools struggle with generative AI: it’s new, complicated, easily accessible, and sometimes unregulated.

So, how does Bellevue School District handle the new hotness of AI?

Bellevue School District (BSD) AI Policy Principles

“Artificial intelligence (AI) is a powerful technology that can enhance learning, creativity, and problem-solving for students. However, AI also poses potential risks and challenges, such as ethical, social, and privacy issues. Therefore, it is important that students use AI in a responsible and respectful manner, following the guidelines and principles of this procedure.”

The truth is, BSD doesn’t really handle AI. This is not a jab at the district; in fact, most school districts still don’t have any policies on AI use in schools! It’s just that there isn’t much to the AI policy itself.

Here are the principles of BSD’s AI policy:

Respect: Students should respect the dignity, diversity, and rights of all human and non-human entities that are affected by AI. Students should not use AI to harm, harass, discriminate, or manipulate others or in ways that violate District policies and procedures.

Responsibility: Students should be responsible for their actions and decisions when using AI. Students should understand the purpose, function, and limitations of AI, and seek guidance from teachers or parents/guardians when in doubt. Students should not use AI for illegal, unethical, or inappropriate purposes or in ways that violate District policies and procedures.

Transparency: Students should be transparent and honest about their use of AI. Students should not misrepresent, deceive, or hide their use of AI or its data. Students should respect the intellectual property and privacy of others when using AI.

Accountability: Students should be accountable for the consequences and impacts of their use of AI. Students should report any problems, errors, or issues that arise from AI use to their teachers. Students should cooperate with any investigations or audits related to AI use.

Four principles. Respect how AI can affect others, be responsible for your AI use, be transparent about it, and be accountable for it.

It’s simple. So what’s the problem?

Debate about BSD’s AI Policy Principles

“Even still, bias in AI, whether it unjustly punishes us for our race, age, weight, gender, religion, disability status, or economic class, is not a computer problem. It’s a people problem.”

The most glaring problems with BSD’s AI policy come from the first two principles: respect and responsibility.

In the first principle, the policy states:

Students should not use AI to harm, harass, discriminate, or manipulate others or in ways that violate District policies and procedures.

Did you catch that?

The problem is that generative AI models are trained on enormous amounts of data. And where does that data come from? Humans.

And when subjective humans try to produce objective data and present it as fact, we sometimes get it wrong. Like, really wrong.

Why? Because humans are biased.

Shannon Vallor, an American philosopher of technology and professor at the University of Edinburgh, recently published her book The AI Mirror: How to Reclaim Our Humanity in an Age of Machine Thinking in 2024.

Vallor points out three big problems with AI: (1) the models learn from biased data, which can end up reinforcing social injustices, (2) depending on AI too much can “deskill” us, and (3) the models tend to “bullcrap” us when they don’t know the answer.

So, what’s the connection between that and students using AI in BSD? The problem is that these large language models can already harm, discriminate against, and manipulate users on their own, no matter how carefully students use them.

“However, in none of these [AI] systems can the average user — or, in some cases, even the system’s regulators, programmers, and administrators — grasp precisely how the decision process is being carried out or what salient factors are driving the algorithm’s results.”

Here’s another issue, this time with the second principle of BSD’s AI policy:

Students should understand the purpose, function, and limitations of AI, and seek guidance from teachers or parents/guardians when in doubt.

There are two compounding issues with this principle: (1) most people, including teachers, parents, and guardians, don’t know much about the ethical issues or how AI models actually work, so they can’t provide real guidance, which leads to (2) students failing to understand the limitations of AI.

Vallor points out that AI behemoths like OpenAI, Microsoft, Google DeepMind, and Meta largely hide from the public how their models are trained.

Even their programmers have difficulty understanding how their models arrive at certain conclusions.

So if even the programmers struggle to grasp how their models work, how could those of us outside the field know any more than their makers?

Not knowing exactly what goes into training these models raises important ethical questions, and education stakeholders can’t just ignore them.

And let’s not forget about privacy and data security: student data could leak through potential data breaches (“How Artificial Intelligence in Education Is Transforming Classrooms,” 2025).

With that said, you can understand why this principle is deeply flawed.

How Does This Impact You?

“As protections are developed, we recommend that policies center people, not machines.”

Let’s be real. AI is here to stay.

The problem with BSD’s AI policy is that, without enough oversight or understanding of AI, our students can be harmed.

A good AI policy requires more than preventing students from using AI to harm others; it also needs to prevent them from harming themselves.

Students will need guidance from responsible adults. But teachers, parents, and guardians who don’t understand the ethical issues with AI need to be informed first.

Teachers, leaders, parents, guardians, students, and other community members need to know that AI models can be flawed because the data they are fed already contains flaws.

As more and more people hop on the generative AI train, it will slowly change the way we think. Don’t believe me? Think of how social media has changed the way we see the world.

Since generative AI isn’t going anywhere, we have to be more vigilant than BSD’s AI policy requires.

It begins with a basic understanding of how AI works.

More importantly, we need to make sure we understand the benefits and challenges of AI from credible sources. Below are some links that might help you become more informed.

https://www.brookings.edu/articles/ais-future-for-students-is-in-our-hands/

https://learningsciences.smu.edu/blog/artificial-intelligence-in-education

https://www.gse.harvard.edu/ideas/news/25/04/ai-can-add-not-just-subtract-learning

Some of the benefits include solving very complex problems efficiently, supporting people with cognitive needs through AI tools, reducing long, mundane tasks for teachers, inspiring creativity, and augmenting our human abilities.

The most important thing is that we need to be informed about what we are dealing with first.

We can’t have our students outsource their thinking to generative AI models, because those skills are fundamentally what make us human, especially when AI can lie to us or simply be flawed.

We often take pride in what we can do with our minds, so let’s make sure we guide our children through these turbulent times.

Then comes action.

If you want to weigh in on policy, you can contact your local school district by email. BSD can be reached at policyfeedback@bsd405.org.

You can also keep an eye out for your district’s policy schedule. BSD’s policy schedule is here.

Don’t forget to reach out to your school board! Community members can address the BSD School Board by signing up for public comments here.

Also, don’t be afraid to ask teachers how they plan to use AI in their classrooms. We want our policies to ensure that students come first, not machines.

Be informed, and then raise your voice if you think your school district can do more!

Citations

Cardona, M., Rodríguez, R. J., Ishmael, K., & U.S. Department of Education. (2023). Artificial intelligence and the future of teaching and learning. https://www2.ed.gov/documents/ai-report/ai-report.pdf

How artificial intelligence in education is transforming classrooms. (2025, January 9). Southern Methodist University. https://learningsciences.smu.edu/blog/artificial-intelligence-in-education

Regona, M., Yigitcanlar, T., Xia, B., & Li, R. Y. M. (2022). Opportunities and adoption challenges of AI in the construction industry: A PRISMA review. Journal of Open Innovation: Technology, Market, and Complexity, 8(1), 45. https://doi.org/10.3390/joitmc8010045

Vallor, S. (2024). The AI mirror: How to reclaim our humanity in an age of machine thinking. Oxford University Press.